
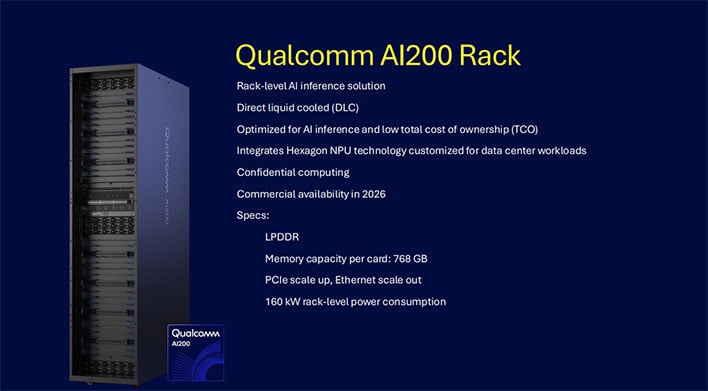

For the AI200, that translates to support for up to 768GB of LPDDR per card, enabling exceptional scale and flexibility for AI inference. Qualcomm’s AI200 rack also integrates a Hexagon NPU and, as a whole, is direct liquid cooled (DLC).

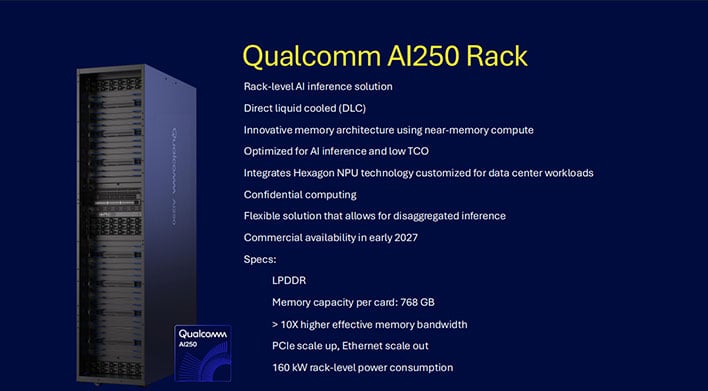

Meanwhile, the AI250 offers the same 768GB memory capacity per card while also introducing what Qualcomm says is an innovative memory architecture based on near-memory computing, for a generational leap in efficiency and performance for AI inference workloads. According to Qualcomm, it delivers more than 10x higher effective memory bandwidth at much lower power consumption.

“With Qualcomm AI200 and AI250, we’re redefining what’s possible for rack-scale AI inference. These innovative new AI infrastructure solutions empower customers to deploy generative AI at unprecedented TCO, while maintaining the flexibility and security modern data centers demand,” said Durga Malladi, SVP & GM, Technology Planning, Edge Solutions & Data Center, Qualcomm Technologies, Inc.

“Our rich software stack and open ecosystem support make it easier than ever for developers and enterprises to integrate, manage, and scale already trained AI models on our optimized AI inference solutions. With seamless compatibility for leading AI frameworks and one-click model deployment, Qualcomm AI200 and AI250 are designed for frictionless adoption and rapid innovation,” Malladi added.
